vPAC: Protection was only the first step – expanding to grid analytics, optimisation, and real-time control at the edge

vPAC has proven protection can run reliably as software in substations. The next phase expands that edge platform into analytics, automation, optimisation and real-time grid control.

Introduction: Why Virtualise? What changes?

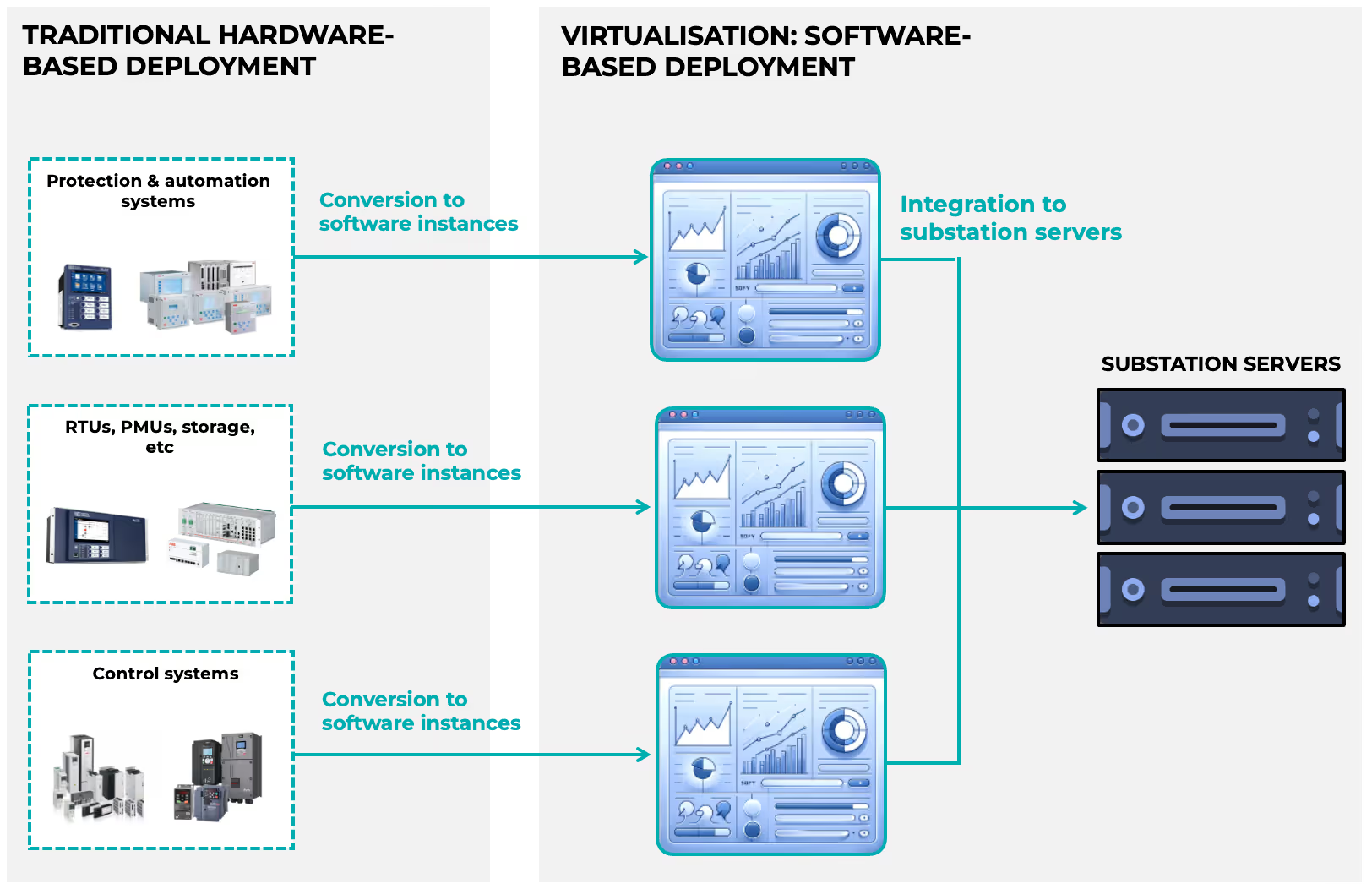

Virtualisation in power systems transforms traditionally hardware-bound functions (protection relays, controllers, RTUs) into software instances running on shared, high-performance substation servers. As illustrated in Figure 1, this shift converts discrete, device-specific hardware into virtualised applications deployed on common computing infrastructure. Instead of installing new physical IEDs for every new function, utilities can deploy software-defined protection, automation, analytics, and control applications as virtual machines (VMs) or containers. The physical footprint shrinks, procurement cycles shorten, and functional expansion becomes a matter of software deployment rather than hardware integration.

But what truly changes is not only where applications run—it is how the grid evolves. Virtualisation introduces scalability, lifecycle flexibility, and multi-vendor interoperability at a structural level. Functions can be updated remotely, scaled dynamically, and integrated across layers without replacing field equipment. Resource allocation becomes programmable. Resilience improves through redundancy and live migration. Most importantly, the substation transforms from a static assembly of devices into a dynamic edge computing platform capable of hosting advanced analytics, optimisation algorithms, and real-time control applications. Protection was the logical first step in this journey. However, once the platform exists, the opportunity expands far beyond protection itself.

What has the industry done so far?

Over the past decade, virtualisation has moved from concept validation to structured industrial adoption, driven by coordinated efforts across alliances, vendors, infrastructure providers, and forward-looking utilities.

Industry Alignment - The Rise of the vPAC Ecosystem:

Virtualisation in power systems is no longer theoretical. Over the past five years, the industry has moved decisively from pilot experimentation to structured industrial initiatives. At the centre of this transition stands the vPAC Alliance, a global consortium established to accelerate the adoption of standards-based, interoperable, and secure architectures for virtualised Protection, Automation and Control (PAC). The Alliance has played a critical role in aligning utilities, vendors, and infrastructure providers around reference architectures and open standards—particularly IEC 61850-based digital substations. This collaborative momentum has validated the concept that protection functions can run reliably in virtualised environments, marking the first wave of software-defined substations.

Application Vendors - Softwarised Protection Becomes Reality:

On the application side, major OEMs have already introduced softwarised protection platforms. ABB has introduced its SSC600 smart substation control and protection solution, alongside broader virtualisation initiatives supported by industry partners such as Intel (see: Virtual Protection Relay). Siemens has launched SIPROTEC V, a fully virtualised protection and automation platform designed to run as software on standardised hardware environments (see: SIPROTEC V). These developments confirm that protection relays—traditionally hardware-embedded—can now operate as deterministic software instances.

Infrastructure Layer - The Emergence of a Virtualisation Stack:

Beneath applications lies a rapidly maturing infrastructure ecosystem. The Linux Foundation Energy’s SEAPATH project has emerged as a reference real-time hypervisor platform for substation virtualisation, providing deterministic performance, CPU isolation, and hardware passthrough capabilities tailored for PAC environments. Enterprise-grade software providers such as Red Hat are enabling hardened operating systems and orchestration layers suitable for mission-critical OT deployments. On the hardware side, substation-ready compute platforms from Dell, Advantech, and Welotec are being adopted to host these virtualised workloads, offering ruggedised, cyber-secure, and high-availability server infrastructure specifically designed for grid environments. Together, these components form the emerging “virtualisation stack” for digital substations: industrial hardware, hardened operating systems, real-time hypervisors, and softwarised protection applications.

Utility Adoption - From Pilots to Structured Programmes:

Utilities are no longer observing from the sidelines. UK Power Networks’ Constellation project has actively explored virtualisation technologies to free network capacity and accelerate DER integration. RTE has publicly documented its journey toward industrial deployment of fully digital PAC systems, demonstrating that virtualised protection is transitioning from laboratory validation to operational rollout (see: Towards an Industrial Deployment of Fully Digital PACS at RTE). In the United States, Salt River Project (SRP) has also engaged in virtualisation and digital substation initiatives, signalling growing North American momentum. These projects demonstrate that the first step—virtualising protection—has moved beyond concept validation into structured deployment programmes. However, while the industry has made significant progress in softwarising protection, most implementations remain centred on replicating existing hardware functionality in a virtual environment. The platform now exists.

The question is no longer whether protection can be virtualised. The question is: what else should run on that same edge infrastructure?

What does the future hold?

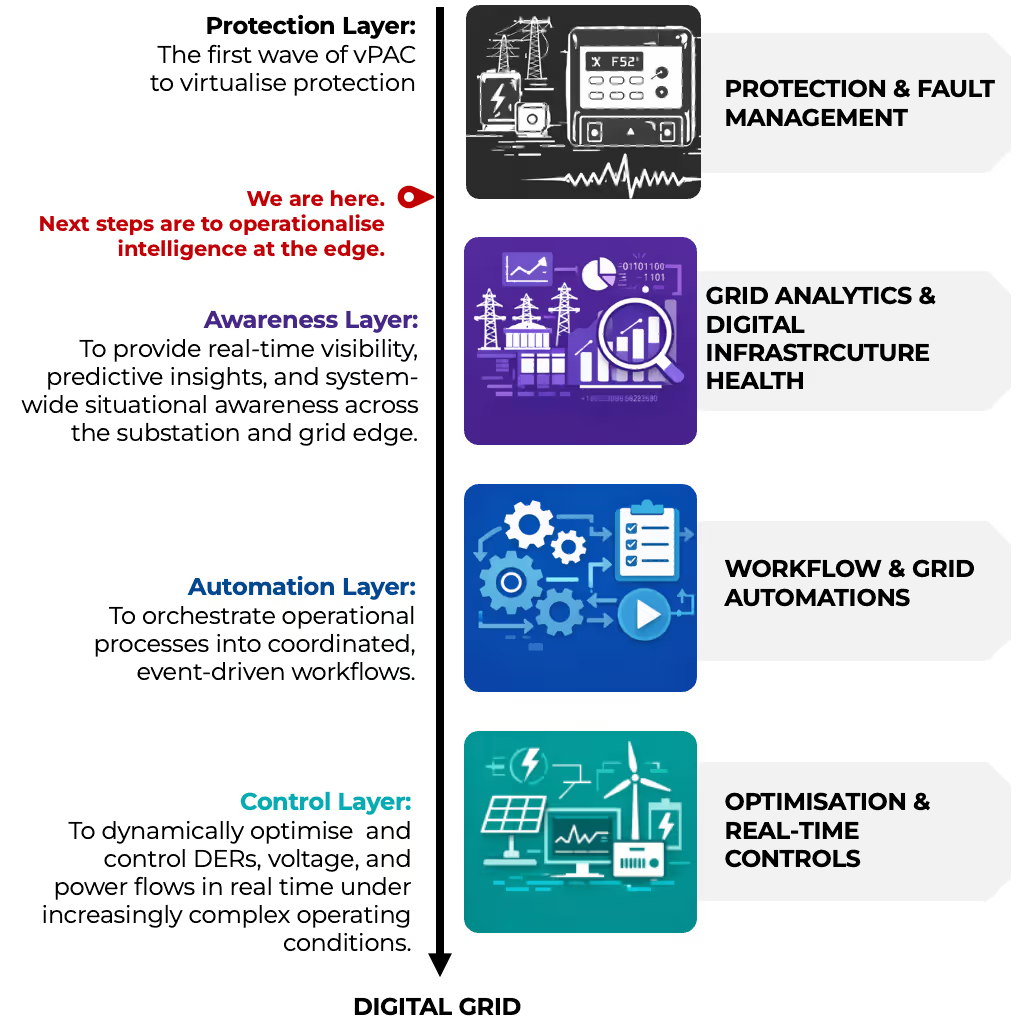

The previous section showed that the industry has so far virtualised mainly protection and fault management functions. As illustrated in Figure 2, we are now at the point marked “We Are Here”: the foundational layer of virtualised protection and fault analytics is established.

The future, therefore, is not about re-validating protection in software. It is about expanding the mission of that same edge infrastructure. Once a deterministic, cyber-secure, high-availability computing platform exists inside the substation, the opportunity extends far beyond traditional relay functionality.

To put things into perspective: the edge computing platform exists, the infrastructure stack is in place, the hypervisors are validated, and the hardware is industrialised. In other words, the feasibility debate is over. The next logical layers, also depicted in Figure 2, are:

- Grid & Substation Analytics (Awareness Layer) transforms the substation from a passive measurement point into an intelligent edge node capable of contextualising and interpreting real-time grid behaviour. By processing high-resolution telemetry from IEDs, DER controllers, and synchrophasor streams directly at the edge, this layer converts raw data into situational awareness—identifying voltage instability risks, congestion trends, hosting capacity margins, and digital asset health indicators before they escalate into operational events. Instead of reacting to faults alone, the system begins to anticipate and quantify system stress, providing predictive insight that enhances operational planning and real-time decision-making. A digital grid must first see and understand itself; awareness is therefore the first true expansion beyond protection.

- Workflow & Grid Automations (Automation Layer) builds upon this awareness by orchestrating coordinated, event-driven responses across protection, control, and operational domains. Rather than relying on manual intervention or fragmented command structures, automated workflows enable structured reactions to grid conditions, whether adjusting DER setpoints in response to voltage excursions, triggering congestion mitigation sequences, or coordinating switching operations during abnormal states. This layer integrates analytics with action, reducing response time and ensuring consistency across multivendor environments. The grid transitions from isolated device-level functionality to system-level coordination, where processes are executed deterministically and repeatably, without operational friction.

- Optimisation & Real-Time Controls (Control Layer) represents the culmination of this evolution, where advanced algorithms actively shape grid conditions rather than merely responding to them. Running within deterministic virtualised environments at the substation edge, real-time optimisation engines can manage DER dispatch, voltage profiles, reactive power flows, congestion relief, frequency stabilisation, and island re-synchronisation with millisecond-level precision. This is where the platform moves beyond monitoring and orchestration into dynamic system control.
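The event-driven pattern behind the Automation Layer can be sketched in a few lines. This is only an illustration under stated assumptions: the `WorkflowEngine` class, the event name, and both handlers are hypothetical and do not describe any specific product.

```python
from collections import defaultdict
from typing import Callable, Dict, List

# All names here (WorkflowEngine, the event types, the handlers) are
# hypothetical and only illustrate the event-driven pattern.
Handler = Callable[[dict], str]


class WorkflowEngine:
    """Maps grid event types to an ordered list of response steps."""

    def __init__(self) -> None:
        self._handlers: Dict[str, List[Handler]] = defaultdict(list)

    def on(self, event_type: str, handler: Handler) -> None:
        """Register a response step for one event type."""
        self._handlers[event_type].append(handler)

    def emit(self, event_type: str, payload: dict) -> List[str]:
        # Run every registered step in registration order;
        # event types with no handlers produce an empty list.
        return [handler(payload) for handler in self._handlers.get(event_type, [])]


def lower_der_setpoint(event: dict) -> str:
    return f"reduce active power at {event['site']}"


def notify_control_room(event: dict) -> str:
    return f"notify operator: overvoltage {event['v_pu']:.2f} pu at {event['site']}"


engine = WorkflowEngine()
engine.on("voltage_excursion", lower_der_setpoint)
engine.on("voltage_excursion", notify_control_room)
actions = engine.emit("voltage_excursion", {"site": "feeder-7", "v_pu": 1.07})
```

Because the steps run in a fixed, registered order, the same event always produces the same sequence of actions, which is the repeatability the Automation Layer relies on.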

Only when awareness, automation, and optimisation operate together on the same virtualised edge infrastructure does the substation become a true digital node—and only then can the power system evolve into a truly digital grid, one that is adaptive, distributed, software-defined, and inherently intelligent.

Advancements by SMPnet

SMPnet has been advancing the next layers of virtualised grid intelligence—analytics, automation, optimisation, and real-time control—well beyond traditional approaches. These capabilities are not conceptual; they have been deployed, validated, and integrated within utility environments, including projects such as UK Power Networks’ Constellation initiative. Building on the proven virtualisation foundation discussed earlier, we present below a glimpse of the architectural and operational advancements that enable scalable, industrial-grade edge intelligence.

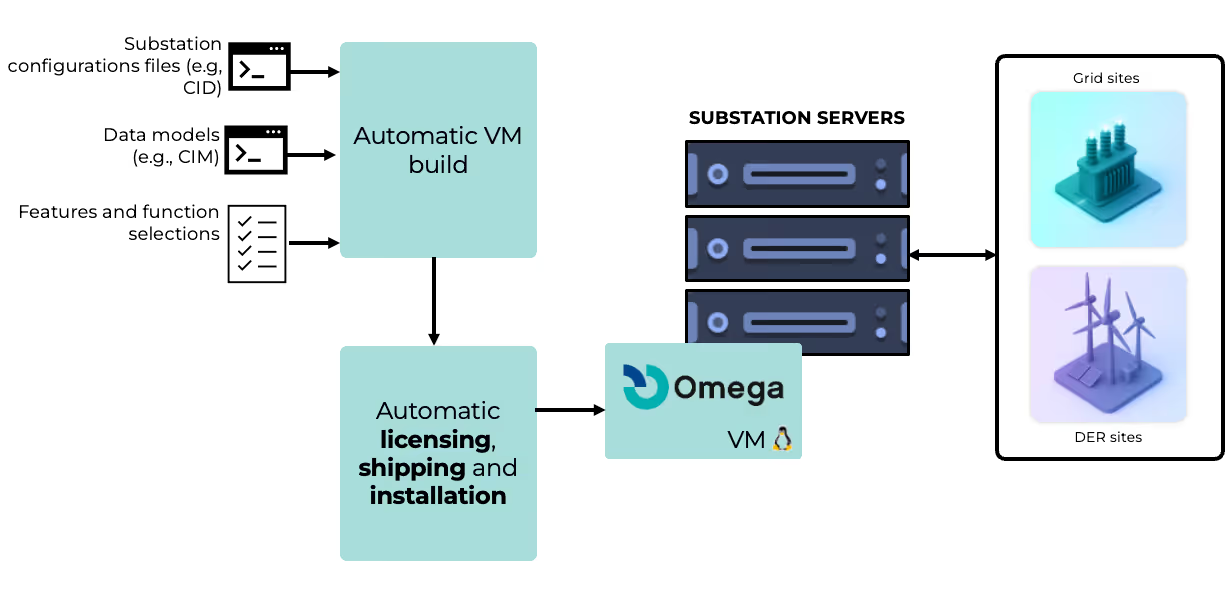

Industrialising Edge Intelligence – Automated VM Build and Deployment: A critical barrier to scaling virtualised applications across substations has not been performance; it has been lifecycle management. Manual configuration, on-site provisioning, licensing complexity, and environment-specific tuning have historically limited scalability. As illustrated in Figure 3, SMPnet has fully automated the virtual machine build, licensing, shipping, and deployment process. From configuration templates and CID mappings to automated image generation and remote installation, VM instances can now be provisioned across multiple substations within hours rather than weeks or months. Licensing mechanisms are aligned with modern hypervisor standards, binding application licenses to the UUID of each VM instance. This ensures portability, traceability, and cybersecurity compliance while maintaining deterministic execution environments. The result is a shift from “project-based deployment” to industrialised rollout, where applications can be replicated, scaled, and updated remotely across fleets of substations without physical intervention.

This advancement directly addresses the operational bottlenecks historically associated with hardware-based deployments: physical installation delays, complex commissioning cycles, and recurring site visits that can cost thousands per intervention. By transforming deployment into a software-defined workflow, virtualised edge applications become as scalable as modern cloud services—while still operating within real-time, mission-critical OT environments.
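To make the idea of binding a licence to a VM's UUID concrete, here is a minimal sketch. It assumes an HMAC-signed token; the signing key, function names, and token format are hypothetical and are not a description of SMPnet's actual licensing mechanism.

```python
import hashlib
import hmac
import uuid

# Hypothetical vendor signing key; a real system would keep this in a
# secure key store rather than in source code.
VENDOR_KEY = b"example-signing-key"


def issue_licence(vm_uuid: uuid.UUID, product: str) -> str:
    """Return a licence token tied to one VM instance and one product."""
    message = f"{vm_uuid}:{product}".encode()
    return hmac.new(VENDOR_KEY, message, hashlib.sha256).hexdigest()


def licence_is_valid(token: str, vm_uuid: uuid.UUID, product: str) -> bool:
    """Check the token against the UUID reported by the running VM."""
    expected = issue_licence(vm_uuid, product)
    # compare_digest avoids leaking information through timing differences.
    return hmac.compare_digest(token, expected)


vm_a = uuid.UUID("123e4567-e89b-12d3-a456-426614174000")
vm_b = uuid.UUID("123e4567-e89b-12d3-a456-426614174001")
token = issue_licence(vm_a, "voltage-optimiser")
```

A token issued for `vm_a` validates only on a VM that reports the same UUID, so copying the image to a VM with a different UUID invalidates the licence, while migrating the same VM instance (UUID unchanged) does not.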

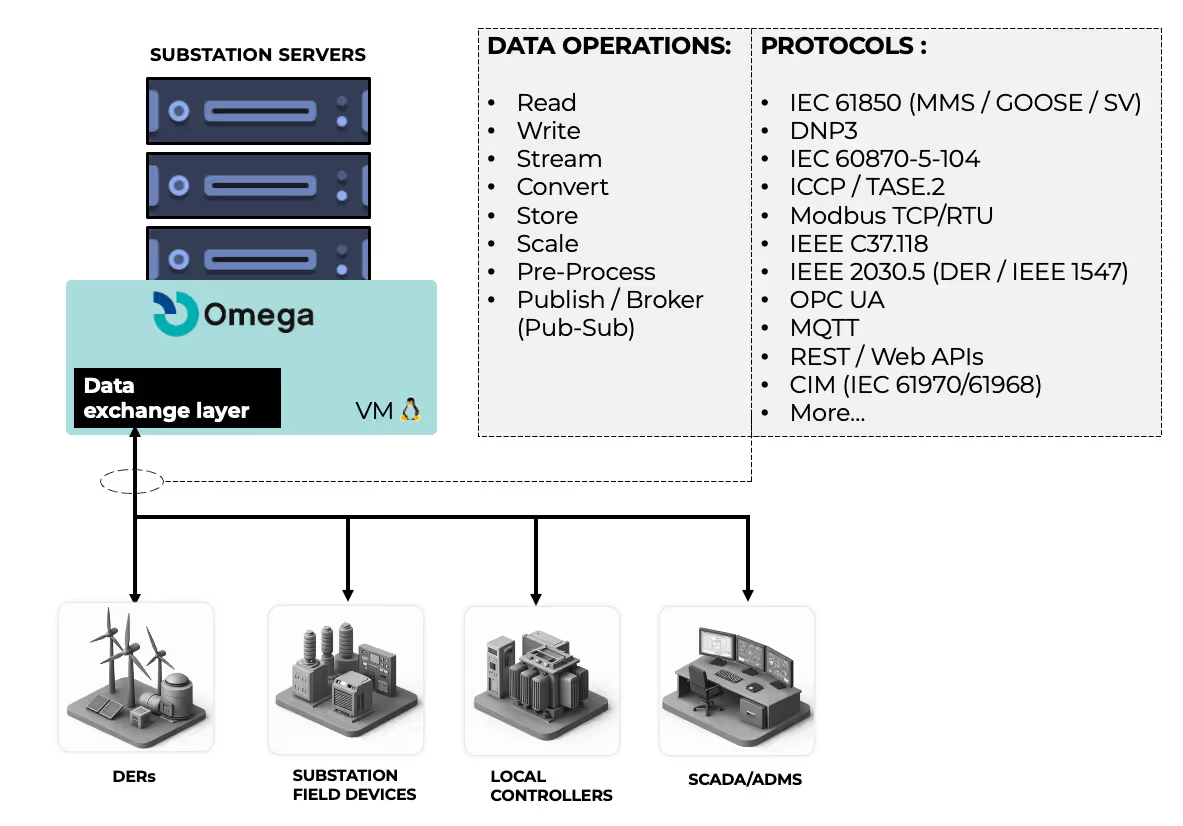

Virtualised Edge Data Layer – Interoperability Without Friction: If deployment scalability is the first challenge, interoperability is the second. Modern substations operate in multi-vendor ecosystems, integrating protection IEDs, DER controllers, PMUs, SCADA systems, and enterprise platforms—often across heterogeneous protocol stacks. As highlighted in earlier research, interoperability issues can account for up to 20% of total project time and cost, with industry-wide integration inefficiencies reaching billions annually (see Virtualisation in Power Grids article). To address this structural constraint, SMPnet has developed a virtualised edge data layer—illustrated in Figure 4—that abstracts protocol complexity into a unified operational fabric.

This layer does far more than simple read-and-write communication. It supports real-time streaming, event logging, scalable data ingestion, protocol translation, structured data modelling, and secure northbound and southbound integration across IEC 61850, DNP3, IEC 60870-5-104, Modbus, synchrophasor streams, DER protocols, and modern API-based interfaces. Crucially, it enables consistent data normalisation across DER sites, substations, and SCADA environments, ensuring that analytics, optimisation, and control applications operate on harmonised datasets rather than fragmented device-level signals.

Why is this important? Because advanced edge intelligence depends not only on compute power, but on coherent data access. Without deterministic, interoperable, and scalable data exchange, optimisation algorithms and automation workflows cannot function reliably. By virtualising the data fabric itself, the substation transitions from a protocol gateway to an intelligence hub, capable of orchestrating distributed assets with precision and resilience.
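Data normalisation, as described above, can be illustrated with a small sketch. It assumes two hypothetical sources: a Modbus register holding a scaled integer, and a DNP3 analog input reporting volts. The `Measurement` type, the adapter functions, and the scale factors are illustrative assumptions, not part of any real protocol library.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Measurement:
    """Protocol-independent record that downstream applications consume."""
    site: str
    quantity: str          # e.g. "voltage_kv"
    value: float
    source_protocol: str


def from_modbus(site: str, register_value: int, scale: float) -> Measurement:
    # Modbus registers carry raw integers; apply a per-point scale factor
    # (assumed here to convert directly to kV).
    return Measurement(site, "voltage_kv", register_value * scale, "modbus")


def from_dnp3(site: str, analog_input_volts: float) -> Measurement:
    # Assume this DNP3 analog input reports volts; convert to kV.
    return Measurement(site, "voltage_kv", analog_input_volts / 1000.0, "dnp3")


readings = [
    from_modbus("der-site-1", 1102, 0.01),   # about 11.02 kV
    from_dnp3("substation-a", 11050.0),      # about 11.05 kV
]

# Downstream code sees one schema and one unit, whatever the source protocol.
highest = max(readings, key=lambda m: m.value)
```

Analytics and control code written against `Measurement` never needs to know which protocol a value arrived on, which is the point of harmonising at the data layer.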

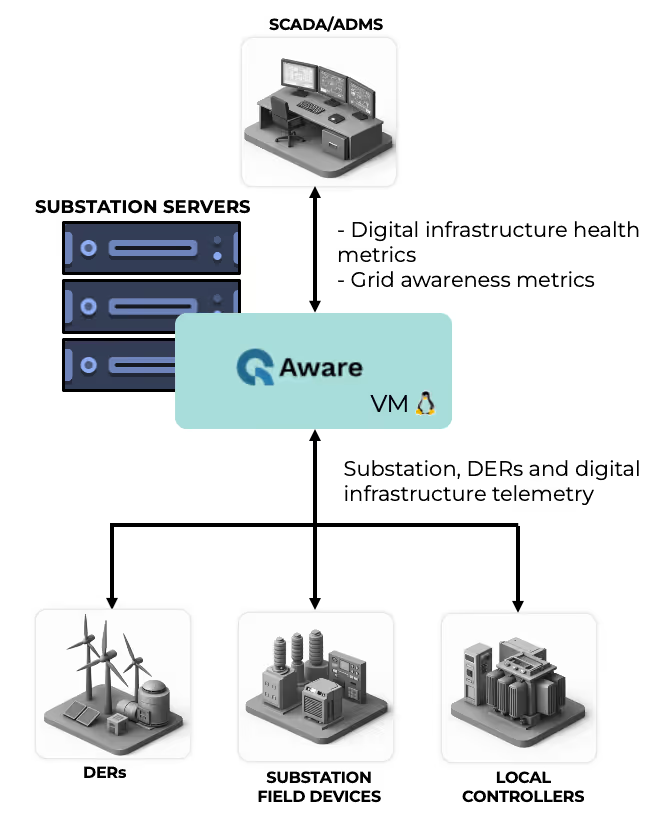

Operationalising Grid Awareness at the Edge: While deployment automation and interoperability provide the structural foundation, the true transformation begins when the edge starts generating intelligence. As illustrated in Figure 5, the Omega suite VM operationalises grid awareness by aggregating telemetry, events, infrastructure metrics, and DER data into a coherent, real-time awareness layer. This is not simply data collection; it is structured, contextualised visibility across substations, DER sites, and upstream control centres. Voltage profiles, asset loading, communication health, DER availability, and event streams are processed and correlated at the edge before being exposed northbound to SCADA, ADMS, or enterprise systems.

This architectural shift is critical. Traditionally, substations functioned as measurement forwarding points—telemetry was pushed upstream, and intelligence resided centrally. In high-DER environments, that model introduces latency, bandwidth strain, and fragmented situational awareness. By contrast, edge-based awareness enables pre-processing, anomaly detection, event correlation, and metric generation locally, reducing reaction time and improving resilience. Infrastructure health monitoring—CPU loads, communication integrity, packet performance—runs alongside grid metrics, ensuring that both electrical and digital layers are continuously supervised. The result is a substation that understands not only electrical behaviour but also its own operational integrity.
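Local anomaly detection of the kind mentioned above can be as simple as comparing each new sample with a rolling window of recent values. The sketch below uses a rolling z-score, which is one common technique chosen here for illustration; the class name, window size, and threshold are assumptions and not the method used in the Omega suite.

```python
from collections import deque
from statistics import mean, pstdev


class RollingAnomalyDetector:
    """Flags samples that deviate strongly from a window of recent values."""

    def __init__(self, window: int = 20, threshold: float = 3.0,
                 min_samples: int = 5) -> None:
        self.samples = deque(maxlen=window)
        self.threshold = threshold
        self.min_samples = min_samples

    def update(self, value: float) -> bool:
        """Return True if value is anomalous relative to the current window."""
        is_anomaly = False
        if len(self.samples) >= self.min_samples:
            mu = mean(self.samples)
            sigma = pstdev(self.samples)
            if sigma > 0 and abs(value - mu) > self.threshold * sigma:
                is_anomaly = True
        # Keep anomalous samples out of the window so one spike
        # does not distort the baseline.
        if not is_anomaly:
            self.samples.append(value)
        return is_anomaly


detector = RollingAnomalyDetector()
voltages_pu = [1.00, 1.01, 0.99, 1.00, 1.01, 0.99, 1.20]
flags = [detector.update(v) for v in voltages_pu]
```

Only the final sample (1.20 pu) is flagged. Running this at the edge means a flag can be forwarded upstream as a single event instead of streaming every raw sample to a central system.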

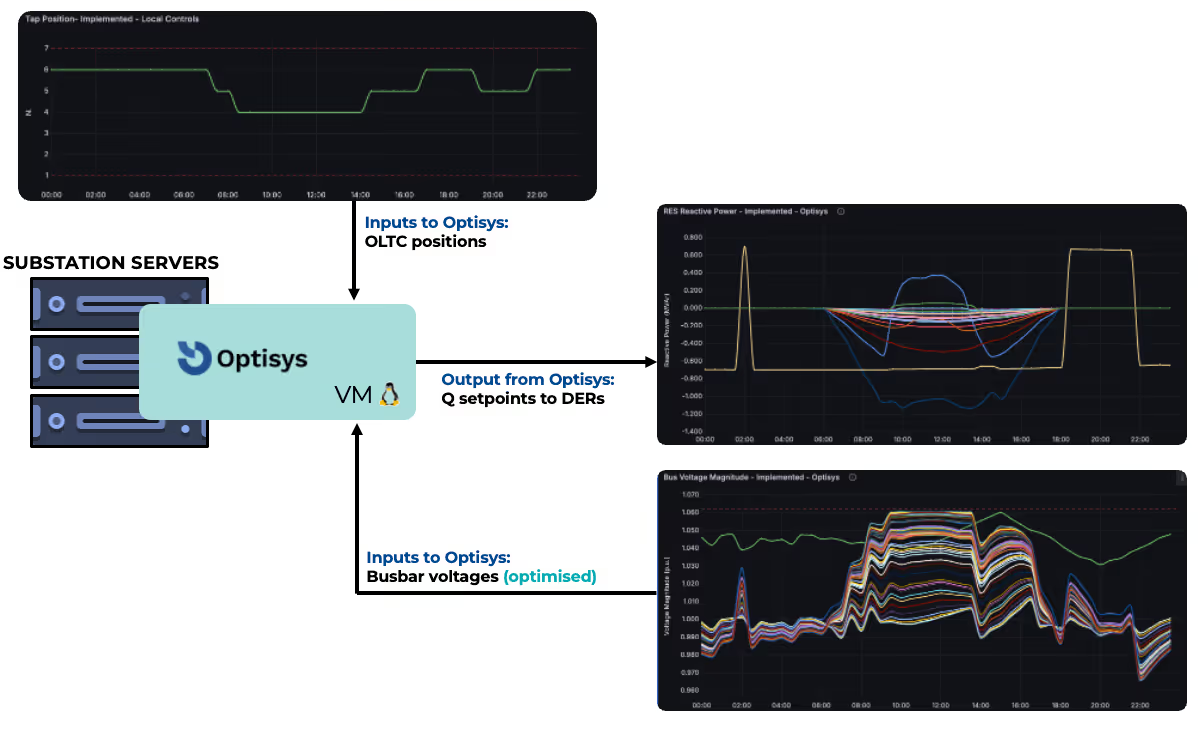

VM-Based Wide Area Voltage Optimisation – From Awareness to Action: Once awareness is established, optimisation becomes actionable. Figure 6 presents the VM-based deployment of Optisys, demonstrating wide-area voltage optimisation operating directly within a virtualised substation environment. This capability forms part of SMPnet’s deployment with UK Power Networks for wide-area grid optimisation (see: SMPnet Secures UK Power Networks Contract for Wide Area Grid Optimisation). Within this framework, voltage measurements, OLTC positions, reactive power flows, and DER capabilities are continuously analysed, and optimal setpoints are dispatched in closed loop to controllable assets. The system moves from observing voltage excursions to actively shaping voltage profiles across feeders and substations in operational environments.

The importance of running such optimisation engines at the edge cannot be overstated. Wide-area voltage optimisation in DER-dominated networks requires frequent recalculation cycles, deterministic execution, and reliable communication with multivendor assets. Virtualised deployment ensures that optimisation algorithms execute within controlled resource environments, leveraging CPU isolation, real-time scheduling, and hardened infrastructure consistent with modern substation hypervisor platforms. Setpoints for active and reactive power are issued dynamically, improving voltage stability, managing congestion, and increasing renewable hosting capacity without introducing centralised computation delays or communication bottlenecks.
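As a rough illustration of one step in such a closed loop, the sketch below computes a reactive power setpoint from a measured voltage using a simple volt-var droop rule with a deadband. This is a generic textbook rule swapped in for illustration, not the Optisys algorithm; the function name, gain, deadband, and sign convention are all assumptions.

```python
def reactive_power_setpoint(v_pu: float, q_max_kvar: float,
                            v_ref: float = 1.0, deadband: float = 0.01,
                            droop_kvar_per_pu: float = 5000.0) -> float:
    """Volt-var droop: negative result absorbs Q (voltage high),
    positive result injects Q (voltage low)."""
    error = v_pu - v_ref
    if abs(error) <= deadband:
        return 0.0
    # Measure the error from the edge of the deadband so the curve
    # is continuous where the deadband ends.
    effective = error - deadband if error > 0 else error + deadband
    q = -droop_kvar_per_pu * effective
    # Never request more than the DER can deliver.
    return max(-q_max_kvar, min(q_max_kvar, q))


q_nominal = reactive_power_setpoint(1.00, q_max_kvar=200.0)   # inside deadband
q_high = reactive_power_setpoint(1.05, q_max_kvar=200.0)      # clamped absorption
q_low = reactive_power_setpoint(0.97, q_max_kvar=200.0)       # partial injection
```

A wide-area optimiser would replace this per-device rule with a network-level calculation across OLTCs and DERs, but the loop structure is the same: measure, compute a setpoint within asset limits, dispatch, repeat.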

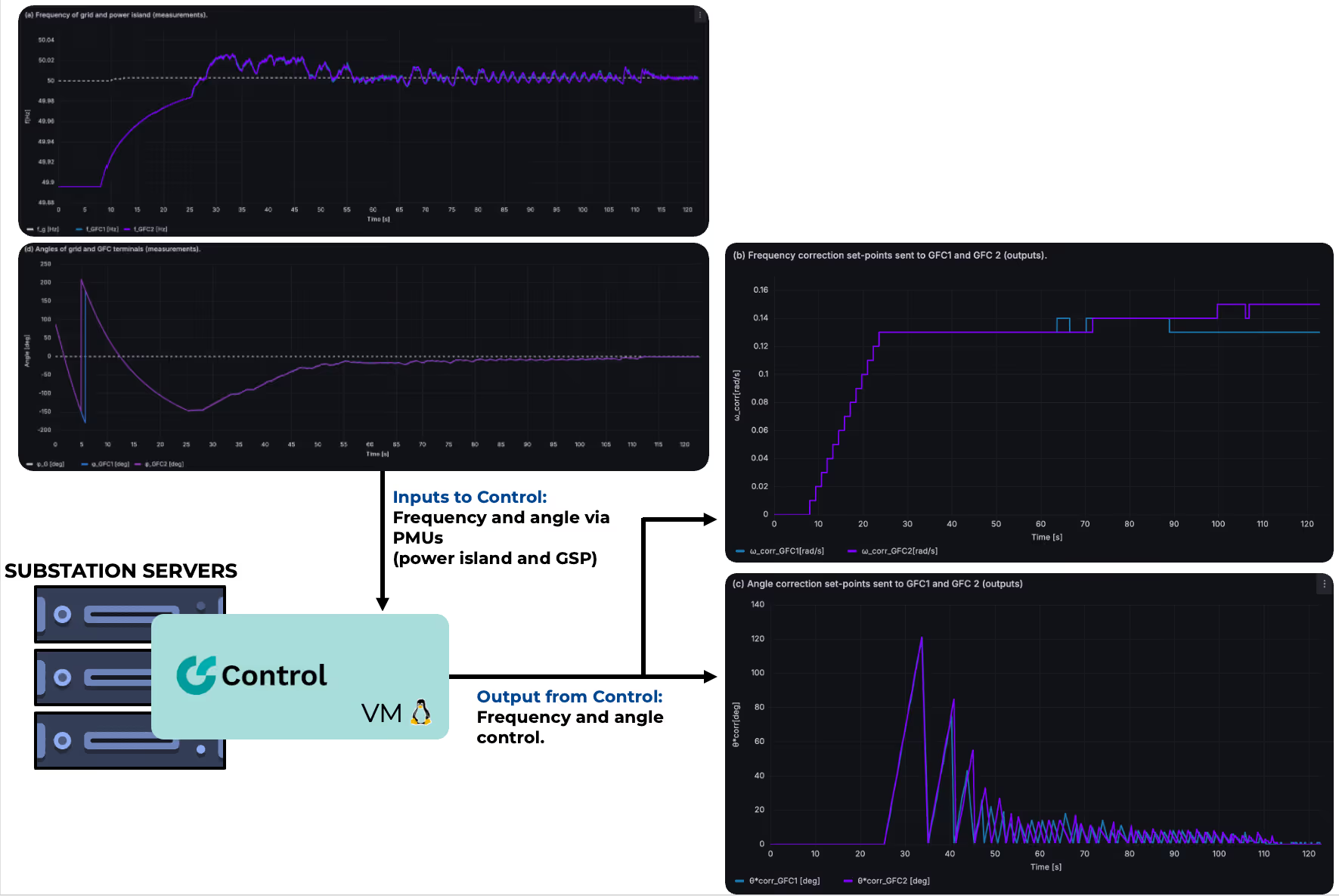

VM-Based Frequency and Angle Control for Power Island Operation and Re-Synchronisation: If voltage optimisation demonstrates closed-loop control, Figure 7 demonstrates something even more demanding: dynamic frequency and phase angle regulation during islanded operation and grid re-synchronisation. In this deployment, the Control VM operates directly on substation servers, interfacing with battery energy storage system (BESS) converters to regulate frequency deviations and manage phase angle alignment between a power island and the main grid.

The control application continuously processes PMU streams – i.e., frequency and angle measurements from both the islanded section and the grid supply point. During islanded operation, it dynamically adjusts active power injection from the BESS to stabilise frequency and damp oscillations. As reconnection conditions approach, the system performs controlled phase angle correction, issuing precise setpoints to converters to ensure synchronisation thresholds are met safely and deterministically. This is not supervisory logic; it is real-time dynamic control operating within millisecond response windows.
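The two tasks described above, frequency support during islanding and a synchronisation check before reconnection, can be sketched as follows. This is a simplified illustration that assumes a 50 Hz system and a purely proportional controller; the function names, gain, and thresholds are hypothetical and do not represent the deployed control application.

```python
def bess_power_setpoint(f_hz: float, p_max_kw: float,
                        f_nominal: float = 50.0,
                        gain_kw_per_hz: float = 1000.0) -> float:
    """Proportional frequency response: inject power when frequency is low,
    absorb power when it is high, within the converter rating."""
    p = gain_kw_per_hz * (f_nominal - f_hz)
    return max(-p_max_kw, min(p_max_kw, p))


def angle_difference_deg(island_deg: float, grid_deg: float) -> float:
    """Signed angle difference wrapped into [-180, 180) degrees."""
    return (island_deg - grid_deg + 180.0) % 360.0 - 180.0


def ready_to_reclose(f_island: float, f_grid: float,
                     island_deg: float, grid_deg: float,
                     max_df_hz: float = 0.1,
                     max_dangle_deg: float = 10.0) -> bool:
    """True when frequency and angle differences are inside the
    (illustrative) synchronisation thresholds."""
    df_ok = abs(f_island - f_grid) <= max_df_hz
    angle_ok = abs(angle_difference_deg(island_deg, grid_deg)) <= max_dangle_deg
    return df_ok and angle_ok


p_support = bess_power_setpoint(49.8, p_max_kw=500.0)    # about 200 kW injection
p_clamped = bess_power_setpoint(49.0, p_max_kw=500.0)    # limited to 500 kW
wrapped = angle_difference_deg(350.0, 10.0)              # -20 degrees
```

The angle wrap matters in practice: PMU angles of 350 and 10 degrees are only 20 degrees apart, not 340, and a naive subtraction would block a reconnection that is nearly within limits.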

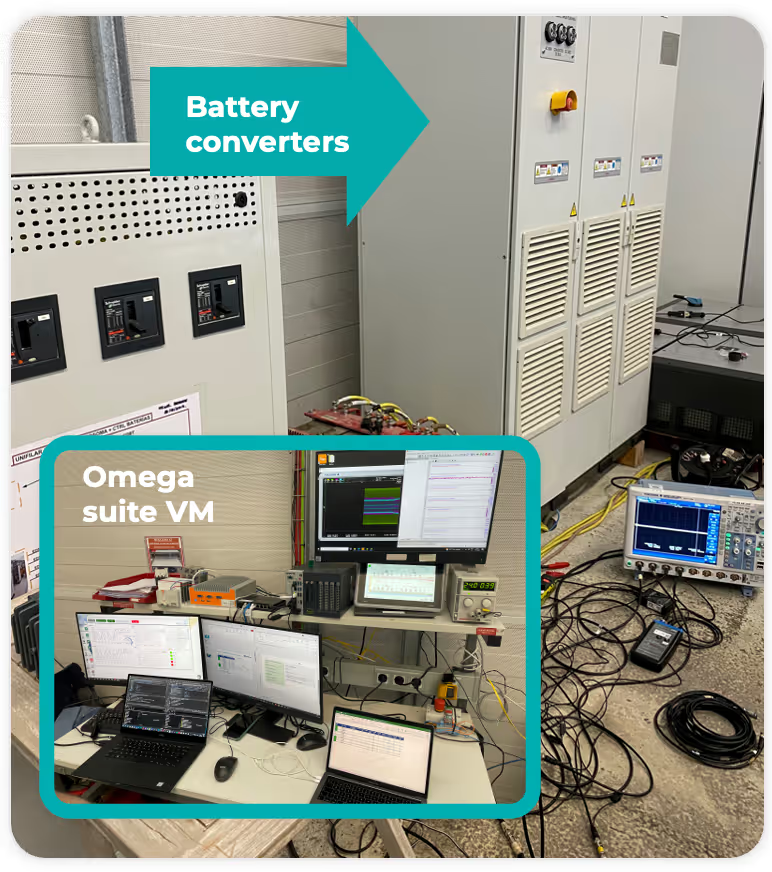

Figure 8 illustrates this setup in a live environment, where the Omega suite VM communicates directly with battery converters and measurement equipment.

This deployment is significant for one reason: it demonstrates that virtualisation is not limited to steady-state analytics or slow optimisation cycles. Even time-sensitive stability functions—traditionally confined to dedicated embedded hardware—can operate reliably within virtual machines at the substation edge. The same infrastructure that hosts protection and optimisation can also manage dynamic frequency and angle control.

At that point, the substation is no longer simply digital.

It becomes an intelligent, software-defined control node within a truly digital grid.

The Road Ahead - From Virtualised Substations to a Software-Defined Grid

Virtualised protection has proven that deterministic, software-based functions can operate reliably within substation environments. The infrastructure is validated, the hypervisors are mature, and the first wave of deployments is complete. The foundation is no longer in question.

The next step is expansion. Awareness, automation, optimisation, and real-time control can now operate on the same edge platform—transforming substations into intelligent, software-defined grid nodes. This is how we move from digital components to a truly digital grid: distributed, adaptive, and operationally intelligent.

If you would like to explore how these capabilities can be integrated into your own vPAC roadmap or digital substation strategy, we welcome the discussion.